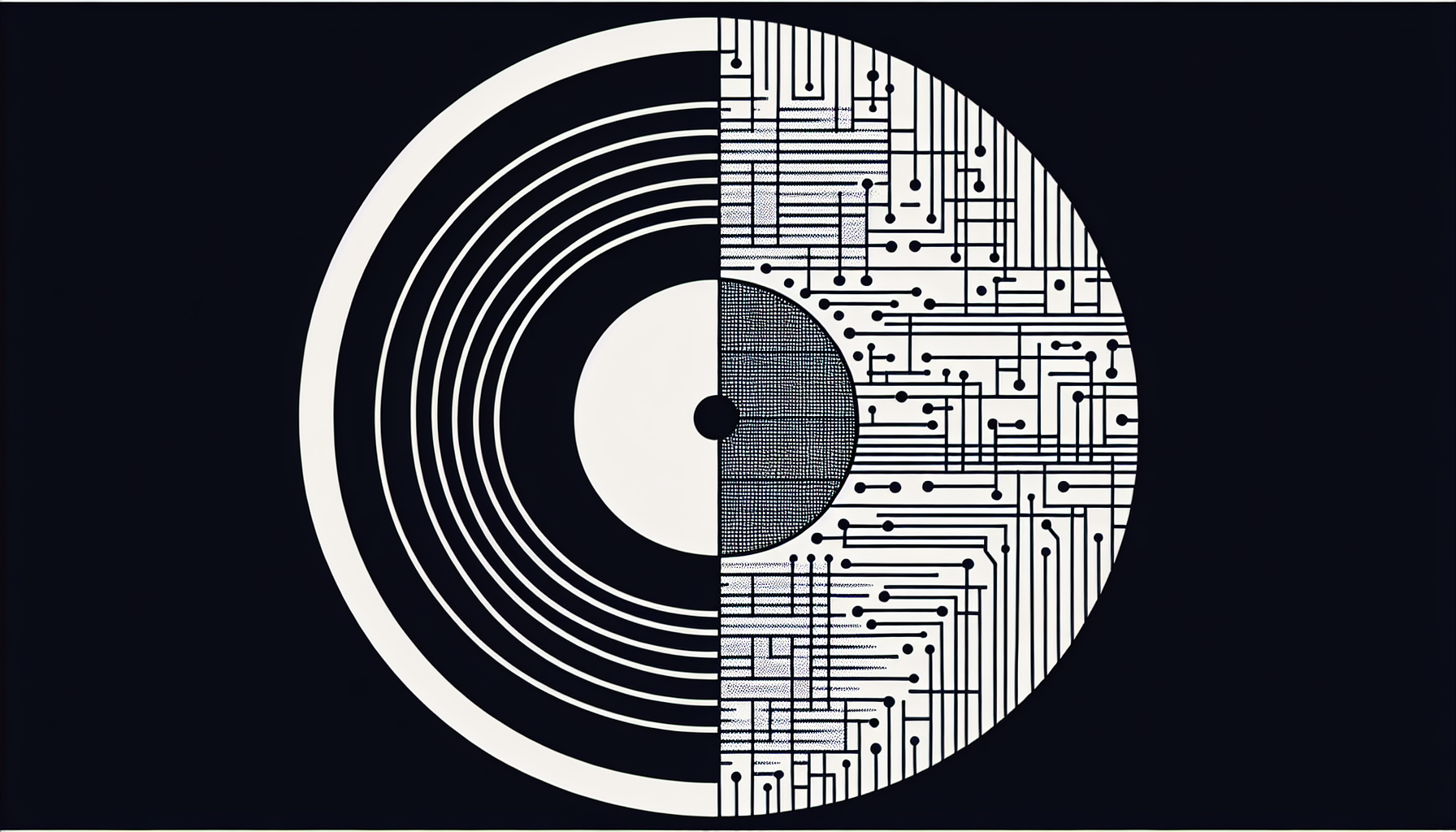

Why can't streaming platforms tell the difference between real and AI-generated music?

The music streaming landscape faces an unprecedented challenge: artificial intelligence-generated content that mimics human creativity so convincingly that even sophisticated detection systems can't identify it. As AI music generation tools become more accessible and advanced, streaming platforms find themselves in an escalating technological arms race against fraudulent content that threatens both artist revenues and platform integrity.

This is more than a technical glitch. It is a systemic vulnerability that exposes the limitations of current content verification systems and raises profound questions about authenticity in the digital music economy. Major platforms like Spotify, Apple Music, and YouTube Music are grappling with very large volumes of AI-generated tracks entering their catalogs, often designed to game algorithmic playlists and siphon streaming revenue from legitimate artists.

The Technical Challenge of AI Music Detection

The fundamental difficulty in distinguishing AI-generated music from human-created content lies in the rapid advancement of generative AI models. Modern AI music systems like AIVA, Amper Music, Soundful, and Google's MusicLM can produce compositions with sophisticated musical structures, emotional nuance, and production quality that rivals professional recordings[1].

Streaming platforms primarily rely on automated content recognition systems that analyze audio fingerprints, metadata, and submission patterns. However, these systems were designed to detect copyright infringement and duplicate uploads—not to distinguish between human and artificial creativity. The challenge is compounded by the fact that AI-generated music often incorporates elements learned from vast datasets of human-created music, making the output stylistically indistinguishable from authentic compositions.

Current detection methods focus on identifying technical artifacts—subtle inconsistencies in audio compression, unusual frequency patterns, or repetitive structural elements that might indicate algorithmic generation. However, as AI models become more sophisticated, these telltale signs are rapidly disappearing. Some AI-generated tracks now include intentional imperfections and variations specifically designed to mimic the natural inconsistencies found in human performances.
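To make the "repetitive structural elements" idea concrete, here is a minimal sketch of one possible heuristic: measuring how much of a track consists of segments that exactly repeat earlier segments. The segment labels, the example tracks, and the function name are hypothetical illustrations, not any platform's actual method. Looped human-made music also scores high, so a value like this could only ever be one weak signal among many.

```python
from collections import Counter

def repetition_score(segments):
    """Share of segments that duplicate an earlier segment (0.0 to 1.0)."""
    if not segments:
        return 0.0
    counts = Counter(segments)
    # Every occurrence beyond the first counts as a repeat.
    repeats = sum(c - 1 for c in counts.values())
    return repeats / len(segments)

# Hypothetical bar-level labels (e.g. quantized chord patterns per bar).
looped_track = ["Am", "F", "C", "G"] * 8
varied_track = ["Am", "F", "C", "G", "Dm", "E7",
                "Am", "F6", "Cmaj7", "G", "Em", "A7"]

print(f"looped: {repetition_score(looped_track):.3f}")
print(f"varied: {repetition_score(varied_track):.3f}")
```

The design choice here is deliberate simplicity: exact-match counting is easy to defeat with small variations, which is the same weakness the paragraph above describes.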

The Economics of AI Music Fraud

The financial incentives driving AI music fraud are substantial and growing. Streaming platforms typically pay royalties based on play counts, with payments ranging from approximately $0.0003 to $0.004 per stream on major platforms[2]. This micro-payment model creates opportunities for fraudsters to generate significant revenue through volume-based strategies.
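The arithmetic behind the volume strategy is simple. The sketch below uses only the per-stream range quoted above; the stream count is a hypothetical round number chosen for illustration.

```python
LOW_RATE = 0.0003   # USD per stream, low end of the range quoted above
HIGH_RATE = 0.004   # USD per stream, high end of the range quoted above

def payout_range(streams):
    """Return (low, high) estimated royalties in USD for a stream count."""
    return streams * LOW_RATE, streams * HIGH_RATE

# Hypothetical volume: one million streams across a catalog.
low, high = payout_range(1_000_000)
print(f"1,000,000 streams: ${low:,.2f} to ${high:,.2f}")
```

At these rates, revenue only becomes meaningful at very high stream counts, which is why fraud schemes lean on large catalogs and automated plays rather than on any single track.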

AI-generated music fraud operates through several mechanisms. The most common involves creating large catalogs of AI-generated tracks under fictitious artist names, then using bot networks or playlist manipulation to artificially inflate play counts. More sophisticated operations pitch AI-generated music to editorial playlists or tune it to be picked up by algorithmic recommendation systems, where successful placement can generate millions of streams.

The low cost of AI music generation—often requiring only computational resources and basic technical knowledge—creates an attractive risk-reward ratio for fraudsters. A single individual can potentially generate hundreds of tracks per day using current AI tools, creating vast catalogs that can be monetized across multiple platforms simultaneously.

According to industry reports, streaming fraud including AI-generated content may account for approximately 1-3% of total streaming volume on major platforms, representing tens of millions of dollars in misdirected royalty payments annually. This figure is likely conservative, as many fraudulent streams go undetected by current monitoring systems.

Platform Detection Capabilities and Limitations

Major streaming platforms have implemented various detection systems, but each faces significant limitations. Spotify's approach combines automated analysis with human review processes, focusing on identifying suspicious upload patterns, unusual streaming behaviors, and technical inconsistencies in audio files. The platform has removed millions of tracks suspected of being artificially generated or manipulated, but acknowledges that detection remains an ongoing challenge[3].

Apple Music and YouTube Music employ similar multi-layered detection systems, incorporating machine learning algorithms trained to identify potential AI-generated content. However, these systems face the fundamental challenge of distinguishing between legitimate AI-assisted music production—which is increasingly common in professional music creation—and fraudulent AI-generated content designed to exploit streaming economics.

The detection challenge is further complicated by the legitimate use of AI tools in music production. Many professional artists and producers now use AI for composition assistance, sound design, and production enhancement. This creates a gray area where the line between AI-assisted human creativity and purely AI-generated content becomes increasingly blurred.

Platform detection systems also struggle with the scale of content uploads. Spotify receives approximately 60,000-80,000 new tracks daily as of 2024, making comprehensive human review impossible. Automated systems must therefore rely on pattern recognition and anomaly detection, which can be circumvented by sophisticated fraudsters who understand these detection mechanisms.
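As an illustration of the kind of anomaly detection described here, the following sketch flags accounts whose daily upload count is an outlier, using the modified z-score (based on the median and the median absolute deviation). The account names, counts, and the choice of this particular statistic are assumptions for the example; real platform systems are not public and are certainly more elaborate.

```python
from statistics import median

def flag_outliers(daily_uploads, threshold=3.5):
    """Return accounts whose upload count has a modified z-score above threshold."""
    values = list(daily_uploads.values())
    med = median(values)
    # Median absolute deviation: a spread measure that outliers barely affect.
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread, so nothing can be ranked as unusual
    flagged = []
    for account, count in daily_uploads.items():
        score = 0.6745 * (count - med) / mad
        if score > threshold:
            flagged.append(account)
    return flagged

# Hypothetical uploads per account in one day.
uploads = {
    "artist_a": 1, "artist_b": 2, "artist_c": 1,
    "artist_d": 3, "artist_e": 2, "bulk_uploader": 240,
}
print(flag_outliers(uploads))
```

A fraudster who knows the threshold can stay under it by spreading uploads across many accounts, which is exactly the circumvention problem noted above.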

The Cat-and-Mouse Game of Detection vs. Evasion

The relationship between AI music generation and detection systems has evolved into a classic adversarial dynamic. As platforms develop new detection capabilities, fraudsters adapt their methods to evade these systems. This has led to increasingly sophisticated evasion techniques that exploit the limitations of automated detection.

Modern AI music fraud operations employ various evasion strategies. These include using multiple AI models to create stylistic diversity, incorporating human-performed elements into AI-generated compositions, and manipulating audio characteristics to mask algorithmic signatures. Some operations even employ human musicians to add subtle variations to AI-generated tracks, creating hybrid content that challenges traditional detection methods.

The temporal aspect of this arms race is particularly challenging for platforms. Detection systems require time to identify and analyze new evasion techniques, during which fraudulent content can accumulate significant streaming revenue. By the time suspicious content is identified and removed, the financial damage may already be substantial.

This dynamic has also led to the emergence of specialized services that offer "undetectable" AI-generated music, marketed specifically to individuals seeking to exploit streaming platform economics. These services often include guidance on optimal upload strategies, playlist submission techniques, and methods for avoiding detection algorithms.

Impact on Artists and the Music Industry

The proliferation of undetected AI-generated music has significant implications for legitimate artists and the broader music industry ecosystem. Revenue dilution represents the most immediate concern, as fraudulent streams divert royalty payments away from authentic creators. This is particularly problematic for independent artists who rely heavily on streaming revenue and lack the resources to compete with high-volume AI-generated content.
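Revenue dilution is easiest to see under a pro-rata payout, where a fixed royalty pool is divided by share of total streams. The sketch below assumes that model, which is how major services are commonly described as paying out; the pool size, party names, and stream counts are all hypothetical.

```python
def pro_rata_payouts(pool, streams_by_party):
    """Split a fixed royalty pool in proportion to each party's streams."""
    total = sum(streams_by_party.values())
    return {party: pool * streams / total
            for party, streams in streams_by_party.items()}

POOL = 100_000.0  # hypothetical monthly royalty pool in USD

honest = {"indie_artist": 50_000, "everyone_else": 9_950_000}
# Add a fraudulent catalog worth roughly 2% of total stream volume.
with_fraud = dict(honest, fraud_catalog=200_000)

before = pro_rata_payouts(POOL, honest)["indie_artist"]
after = pro_rata_payouts(POOL, with_fraud)["indie_artist"]
diverted = pro_rata_payouts(POOL, with_fraud)["fraud_catalog"]

print(f"indie artist before fraud: ${before:,.2f}")
print(f"indie artist after fraud:  ${after:,.2f}")
print(f"paid to fraud catalog:     ${diverted:,.2f}")
```

Because the pool is fixed, every fraudulent stream lowers the value of every legitimate stream, even for artists whose own play counts are unchanged.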

The discovery and recommendation algorithms used by streaming platforms can be manipulated by AI-generated content, potentially reducing the visibility of legitimate artists. When fraudulent tracks achieve high play counts through artificial means, they may receive preferential treatment in algorithmic playlists and recommendation systems, creating a feedback loop that further disadvantages authentic creators.

The psychological and cultural impact extends beyond financial considerations. The knowledge that AI-generated content may be indistinguishable from human creativity raises questions about the value and authenticity of artistic expression. Some artists report feeling discouraged by the possibility that their carefully crafted music might be competing with algorithmically generated content produced at industrial scale.

Industry organizations and artist advocacy groups have begun calling for stronger detection measures and clearer policies regarding AI-generated content. However, technology is developing so quickly that policy and enforcement mechanisms struggle to keep up with emerging threats.

Technological Solutions and Future Developments

The streaming industry is exploring various technological approaches to address AI music detection challenges. Advanced machine learning models specifically trained to identify AI-generated content represent one promising avenue. These systems analyze multiple audio characteristics simultaneously, including spectral patterns, temporal structures, and harmonic relationships that may indicate algorithmic generation.
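As a small example of what a "spectral pattern" feature looks like, the sketch below computes spectral flatness (the ratio of the geometric mean to the arithmetic mean of the power spectrum) with a naive DFT. This is a standard audio descriptor, shown here only as the sort of input a classifier might consume. Nothing in it is claimed to separate AI-generated from human-made audio on its own, and the test signals are synthetic.

```python
import cmath
import math
import random

def power_spectrum(samples):
    """Naive DFT power spectrum for bins 1 .. n/2 - 1 (DC bin skipped)."""
    n = len(samples)
    spectrum = []
    for k in range(1, n // 2):
        acc = sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n)
                  for t in range(n))
        spectrum.append(abs(acc) ** 2)
    return spectrum

def spectral_flatness(samples, eps=1e-12):
    """Geometric mean over arithmetic mean of the power spectrum (0 to 1)."""
    power = [p + eps for p in power_spectrum(samples)]  # eps avoids log(0)
    log_mean = sum(math.log(p) for p in power) / len(power)
    return math.exp(log_mean) / (sum(power) / len(power))

N = 256
# Synthetic test signals: a pure tone and seeded white noise.
tone = [math.sin(2 * math.pi * 8 * t / N) for t in range(N)]
rng = random.Random(0)
noise = [rng.uniform(-1.0, 1.0) for _ in range(N)]

print(f"tone flatness:  {spectral_flatness(tone):.6f}")
print(f"noise flatness: {spectral_flatness(noise):.6f}")
```

A production system would use an FFT library and many such descriptors over short frames; the naive DFT is used here only to keep the example dependency-free.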

Blockchain-based verification systems offer another potential solution, creating immutable records of music creation processes that could help verify authenticity. Some proposals involve requiring creators to provide proof-of-creation documentation, including session files, recording metadata, or other evidence of human involvement in the creative process.
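The core property these proposals rely on is tamper evidence: each record commits to the one before it, so altering history changes every later hash. The sketch below shows that idea with a simple hash chain. It is not a blockchain (there is no distribution or consensus), the record fields are hypothetical, and it cannot by itself prove that a human made the music, only that the submitted records were not modified afterwards.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record

def _digest(prev_hash, payload):
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()

def add_record(chain, payload):
    """Append a record whose hash covers its payload and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev_hash, "payload": payload,
                  "hash": _digest(prev_hash, payload)})

def verify(chain):
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["payload"]):
            return False
        prev_hash = record["hash"]
    return True

# Hypothetical proof-of-creation entries for one track.
chain = []
add_record(chain, {"step": "session_file", "file_sha256": "ab12"})
add_record(chain, {"step": "vocal_take", "file_sha256": "cd34"})
add_record(chain, {"step": "final_master", "file_sha256": "ef56"})

print(verify(chain))
chain[1]["payload"]["file_sha256"] = "ffff"  # tamper with history
print(verify(chain))
```

The open question for any such scheme is the input: a fraudster can log fabricated session files just as immutably as a real artist can log genuine ones.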

Collaborative industry initiatives are also emerging, with platforms sharing detection techniques and suspicious content databases to improve collective defense capabilities. These efforts recognize that AI music fraud is an industry-wide challenge that requires coordinated response rather than isolated platform-specific solutions.

However, technological solutions face inherent limitations. As AI generation capabilities continue to advance, the fundamental challenge of distinguishing artificial from human creativity may become increasingly difficult or even impossible using purely technical means. This suggests that comprehensive solutions may require policy, legal, and industry structure changes beyond technological detection improvements.

Regulatory and Policy Responses

Government regulators and industry bodies are beginning to address AI-generated music fraud through policy initiatives and legal frameworks. The European Union's AI Act, enacted in 2024, includes provisions regarding AI-generated content disclosure requirements, though specific applications to music streaming are still being clarified through implementation guidelines[4].

Industry organizations like the International Federation of the Phonographic Industry (IFPI) and various performing rights organizations are developing guidelines for AI content disclosure and verification. These efforts focus on creating industry standards that could help platforms distinguish between legitimate AI-assisted music production and fraudulent content generation.

Some jurisdictions are considering legislation that would require clear labeling of AI-generated content, though enforcement mechanisms remain challenging to implement effectively. The global nature of streaming platforms and music distribution complicates regulatory approaches, as different jurisdictions may have varying requirements and enforcement capabilities.

The legal framework surrounding AI-generated music also raises complex questions about copyright, authorship, and intellectual property rights that extend beyond fraud detection. These broader legal considerations may ultimately influence how platforms approach AI content verification and disclosure requirements.

Rather than viewing AI music detection as a technical arms race, some industry observers suggest this challenge reflects a fundamental shift toward AI-assisted creativity becoming mainstream. If consumers genuinely cannot distinguish between AI and human-generated music—and don't care about the difference—the real question may be whether traditional notions of musical "authenticity" are becoming obsolete in the streaming era.

The focus on detecting AI-generated content may be addressing the wrong problem entirely, according to some critics of current streaming economics. Instead of spending resources on sophisticated detection systems, platforms might better serve artists by reforming royalty distribution models that have long been criticized as inequitable—regardless of whether the music is created by humans, AI, or collaborative human-AI teams.

Key Takeaways

- AI-generated music has become sophisticated enough to fool current streaming platform detection systems, creating a significant fraud vulnerability

- The economic incentives for AI music fraud are substantial, with low generation costs and potential for high-volume revenue extraction

- Current detection methods rely primarily on technical analysis and pattern recognition, which can be circumvented by sophisticated evasion techniques

- The challenge represents an ongoing arms race between detection capabilities and fraud techniques, with fraudsters adapting quickly to new detection methods

- Legitimate artists face revenue dilution and reduced discoverability as AI-generated content competes for algorithmic attention and streaming royalties

- Technological solutions including advanced ML detection and blockchain verification show promise but face fundamental limitations as AI generation improves

- Industry-wide coordination and regulatory frameworks may be necessary to address the challenge comprehensively beyond purely technical solutions

References

1. Huang, Cheng-Zhi Allen, et al. "Music Transformer: Generating Music with Long-Term Structure." International Conference on Learning Representations, 2019.

2. Spotify. "Loud and Clear: Spotify's Platform Economics." Spotify for Artists, 2023.

3. Spotify Technology S.A. "Form 10-K Annual Report." Securities and Exchange Commission, 2023.

4. European Parliament. "Artificial Intelligence Act." Official Journal of the European Union, 2024.